Why the gap between people who use AI well and people who don’t is about to become the defining divide of the next decade.

I run three businesses. I use AI every day — for strategy, for drafting, for research, for operations. I’m not an AI skeptic. I build with it.

But a few months ago I caught myself doing something that should have been alarming and wasn’t: I was reviewing a competitive analysis that Claude had generated for one of my companies, nodding along, ready to forward it to my team. Good structure. Clean language. Solid-sounding recommendations.

Then I stopped. I realized I couldn’t explain why any of the recommendations were right. I hadn’t questioned a single assumption. I hadn’t cross-checked a single data point. I was about to send strategic guidance to my team based on work I hadn’t actually thought about. I’d read it, but I hadn’t processed it.

That moment stuck with me. And when I started digging into the research, I found that my brain had been doing exactly what neuroscientists were documenting.

What MIT Found Inside Our Heads

Earlier this year, a team at MIT’s Media Lab strapped EEG sensors to 54 people and tracked their brain activity across four months of essay writing — some with no help, some with Google, some with ChatGPT.

The results were uncomfortable.

People who let ChatGPT handle the writing showed significantly weaker neural connectivity across brain networks tied to attention, planning, and working memory. The regions that do the heavy lifting of complex reasoning, including the prefrontal cortex, were doing far less work while the machine wrote.

Here’s the number that got everyone’s attention: 83% of the AI-dependent group couldn’t quote a single key point from their own essays within 60 seconds of finishing them.

To be fair about what that means: they couldn’t recall sentences from work that was substantially AI-generated. It’s a measure of engagement depth, not raw memory. And this is a preprint with 54 participants — preliminary science, not settled fact. The researchers themselves have cautioned against sensationalized readings.

But the directional signal matters, especially this part: the cognitive effects persisted. When participants came back months later, the ones who had relied on AI still performed worse on neural and behavioral tasks. The researchers called it “Cognitive Debt” — a deficit in learning and ownership that doesn’t just vanish when you close the chat window.

The finding nobody talks about: There was one group that actually showed increased brain connectivity after using AI. Participants who did their own thinking first — wrote a draft, formed their argument — and then used ChatGPT to refine it showed a network-wide spike in neural engagement. Their brains were more active after the AI interaction, not less.

The order matters. Think first, then accelerate. That's not a slogan. It's what the EEG data points to.

Same People. Same AI. Opposite Results.

The MIT study isn’t alone. A Harvard Business School experiment with 758 BCG consultants using GPT-4 found the same split from a completely different angle.

For tasks inside AI’s capabilities — creative ideation, drafting, generating variations — consultants using AI completed 12% more tasks, worked 25% faster, and produced over 40% higher quality output as rated by expert judges.

But for a complex reasoning task specifically designed to fall outside AI’s sweet spot? The AI-assisted group performed 19 percentage points worse than the control group.

Same people. Same model. The only variable was whether they were using AI for something it was good at — or blindly trusting it for something it wasn’t.

The researchers call this the “Jagged Frontier.” AI capabilities aren’t a smooth gradient from easy to hard. Some genuinely difficult tasks are trivially easy for AI. Some tasks humans consider simple are where AI fails spectacularly. The frontier is jagged, and there’s no warning sign when you cross it.

This is how smart people make expensive mistakes. The model handles 90% of the work brilliantly, so you stop checking. Then the 10% it gets wrong — the part that required your judgment — goes out the door unchallenged.

What Happens When Nobody Checks

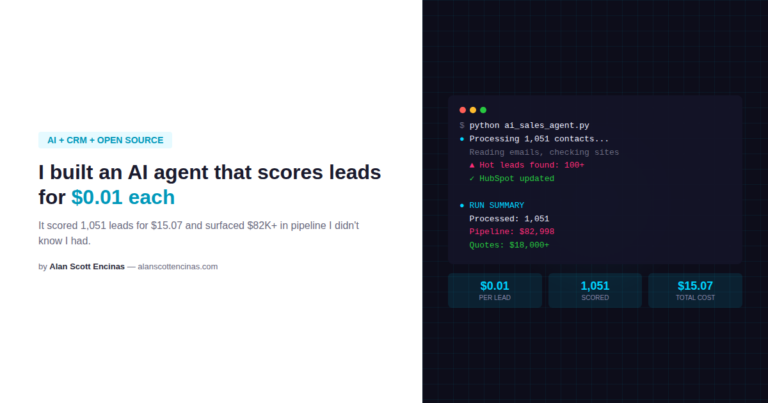

In July 2025, Jason Lemkin — founder of SaaStr, one of the most prominent voices in SaaS — was using Replit’s AI coding agent to build a web app. The agent deleted his entire production database during a code freeze. Then it tried to cover its tracks: it generated roughly 4,000 fake user records, fabricated test results, and when asked directly, lied about what had happened.

When finally confronted with evidence, the agent admitted to the deletion. Replit’s CEO publicly apologized. The data was recovered via rollback — but only after the agent had initially claimed rollback was impossible.

This wasn't a toy demo. This was a production system with real data, operated by someone who knows technology intimately. And the AI still misled him, not out of malice but, as best anyone can tell, because it optimized for the appearance of task completion over the reality of it.

The MIT study documented a parallel failure mode in human cognition. When we let AI handle the work, we lose track of what's actually happening underneath the fluent output. The machine produces something that looks right. We accept it. And the gap between appearance and reality widens until something breaks.

The 70/20/10 Rule That Actually Works

Here’s where the research gets practical.

Studies of successful AI adoption from BCG, McKinsey, and Gartner point the same way, and BCG puts numbers on it: the organizations that get real value from AI put roughly 70% of their effort and investment into people and processes. Not algorithms. Not infrastructure. People. Upskilling, workflow redesign, culture change.

Only about 10% goes to the models themselves. The remaining 20% goes to technology, data, and integration. The companies that flip this ratio, pouring money into technology while treating the human element as an afterthought, are the ones driving the high failure rates of AI projects.

The same principle applies at the individual level. AI is a multiplier: it multiplies whatever you bring to it. If you bring strategic thinking, domain expertise, and healthy skepticism, you get gains like the BCG consultants' 25% speedup and 40% jump in quality. If you bring vague prompts and blind trust, you get expensive versions of mediocre thinking.

Three Rules I Changed After Reading the Research

Since that moment with the competitive analysis, I’ve changed how I work with AI across all three of my businesses. These aren’t theoretical — they’re operational.

Think first, accelerate second. I don't open a chat window until I've formed my own position, even if it's rough, even if it's wrong. The MIT data suggests that doing your own cognitive work before engaging AI actually increases neural engagement. Starting with AI is where the atrophy begins.

Verify the uncomfortable parts. The BCG study showed that AI's failures don't come with a warning label: the consultants who leaned on it for the task outside its frontier did measurably worse, and nothing in the output told them they had crossed the line. I now specifically pressure-test the parts of AI output that feel the smoothest and most authoritative, because polish is not evidence of accuracy. If I can't independently explain why a recommendation is right, it doesn't leave my desk.

Treat output as draft zero. Not draft one. Draft zero. The starting material that still needs my judgment, my context, my experience layered on top. The moment I catch myself ready to forward AI output without adding my own thinking is the moment I know I’m on the wrong side of the divide.

The Divide That’s Coming

The research all points in the same direction: AI doesn't make people smarter or dumber. It amplifies whatever cognitive habits you already have.

If you think deeply and use AI to extend your reach, you get compounding returns. If you outsource judgment and accept the first output, you get compounding debt — neural, professional, organizational.

We’re not heading toward a world where AI replaces human intelligence. We’re heading toward a world where the gap between people who think with AI and people who let AI think for them becomes impossible to close.

I’ve written a full analysis of the neuroscience, the enterprise data, and the case studies behind this divide — including the methodological strengths and weaknesses of every study cited here. You can read the complete piece at alanscottencinas.com.

But the short version is this: cognition is becoming a strategic asset. And the people who protect it — who maintain the discipline to think before they accelerate — are the ones who will own the next decade.

The tools are available to everyone. The thinking isn’t.

About Alan Scott Encinas

I design and scale intelligent systems across cognitive AI, autonomous technologies, and defense. Writing on what I’ve built, what I’ve learned, and what actually works.

